
Most recruiting teams have already touched AI in some form. Maybe someone's been using ChatGPT to draft job descriptions, or there's a tool quietly running in the background for resume screening. But there's a big difference between experimenting with AI and actually building it into how your team operates.

That gap, between scattered usage and a real system, is where most TA teams are stuck right now.

In a recent webinar, we broke down what it actually takes to close that gap: how to figure out where AI fits in your workflow, what tools to consider (and what to watch out for), how to handle the compliance questions that will inevitably come up, and how to bring your team along without losing people in the process. Here's what came out of it.

Being AI-native doesn't mean replacing recruiters with bots. It means AI is embedded into how your team works, not as a party trick or a one-off productivity hack, but as a consistent layer of decision support across sourcing, screening, and hiring.

Most teams aren't there yet. The data shows a fairly predictable distribution: a lot of teams are using one or two AI tools somewhat regularly, a decent chunk are still in early experimentation mode, and a smaller group has genuinely integrated AI into their core workflows. The gap between the first two groups and the third isn't really about technology; it's about intention and structure.

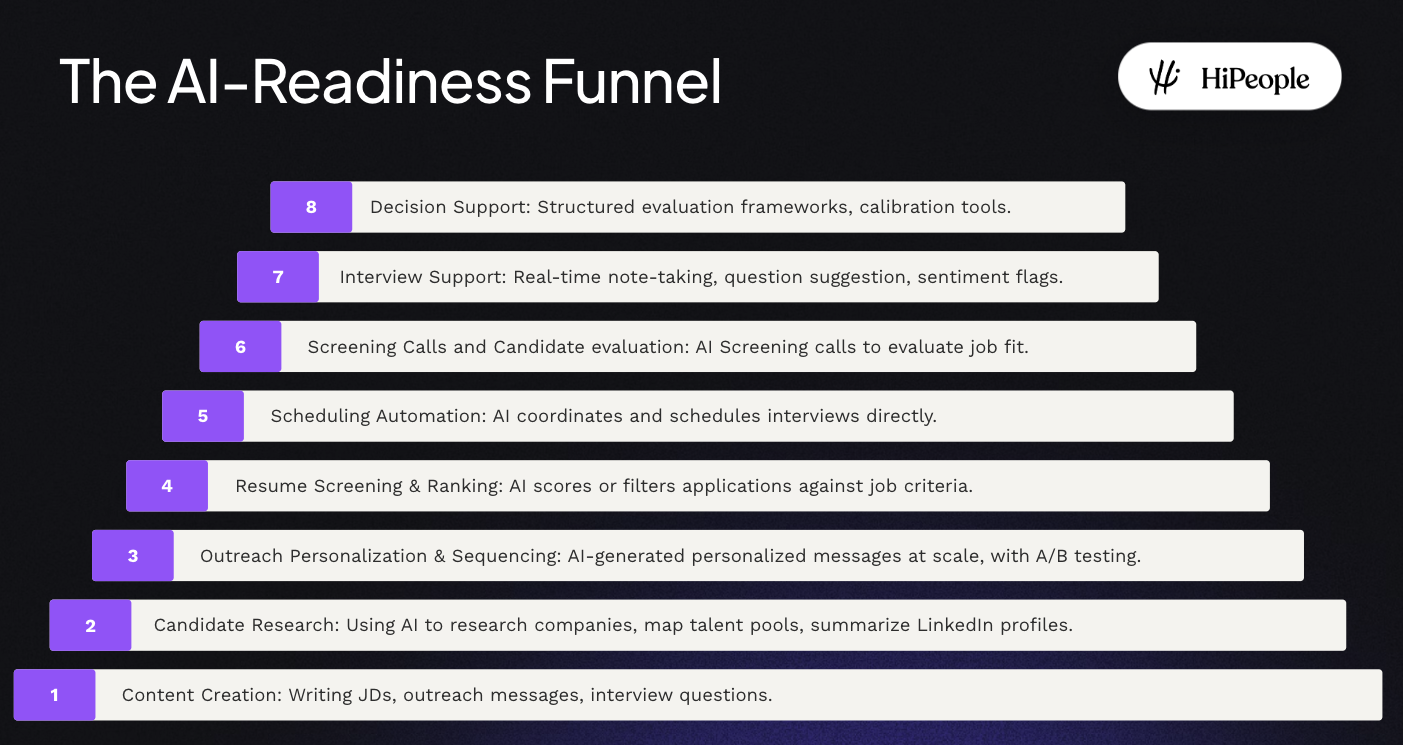

AI adoption in recruiting tends to follow a natural arc. It usually starts with content tasks (drafting job descriptions, writing outreach sequences) because the feedback loop is fast and the risk is low. From there, teams start building it into heavier-lift workflows: screening applications, scoring candidates, automating scheduling. The most advanced teams use AI to support interviews and hiring decisions, surfacing insights rather than making calls.

The key word is support. The most effective AI implementations keep a human clearly in the loop. More on why that matters in a minute.

Before you buy anything or build anything, it's worth doing a structured assessment of where AI can actually move the needle for your team specifically. The goal isn't to map out every possible use case — it's to identify what's eating the most recruiter time right now and prioritize from there.

A simple way to think about it: run through your core recruiting workflow step by step and ask, "What's the most painful, repetitive thing that happens at each stage?"

The workflow tends to break down into roughly eight areas:

Content creation is the natural starting point: job descriptions, outreach copy, follow-up emails. These are high-volume, repeatable tasks where AI can meaningfully speed things up without introducing much risk.

Sourcing and candidate research is where AI can help identify talent pools, surface lookalike candidates, and enrich data, especially useful for outbound-driven hiring motions.

Outreach personalization takes the raw sourcing work and turns it into actual candidate engagement, through personalized sequences and messaging that doesn't read like a mail merge.

Applicant screening (resume review, ranking, initial shortlisting) is consistently where teams report spending the most time. It's also one of the clearest opportunities to return hours to recruiters for higher-value work.

Scheduling is unglamorous but genuinely painful. Every back-and-forth email thread to find a time is a small tax on recruiter capacity. Automation here pays dividends quickly.

Screening calls are an area of growing interest, using AI to collect initial candidate information before a human reviews the conversation. The vendor landscape here requires careful due diligence (more below).

Interview support is evolving beyond simple transcription. The better tools now generate contextual insights from interview conversations, giving interviewers more to work with.

Decision support is the most advanced and also the most nuanced, using everything surfaced earlier in the process to give a clearer picture of candidate fit. This is still a human decision. It always should be.

If you cluster these by what you can realistically act on right now, it shakes out into three buckets: quick wins (content, research, outreach), building blocks (screening, scheduling, screening calls), and advanced steps (interview support, decision support). Most teams should start with quick wins to build momentum, then tackle the building blocks that will free up the most time.
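
As a rough illustration of that prioritization, here is a minimal Python sketch. The bucket assignments come from the grouping above; the `StageEstimate` type, the `prioritize` function, and the hours figures are hypothetical placeholders, so swap in your own team's estimates.

```python
from dataclasses import dataclass

# Bucket for each workflow area, following the three-bucket grouping in the text.
BUCKETS = {
    "content creation": "quick win",
    "sourcing and candidate research": "quick win",
    "outreach personalization": "quick win",
    "applicant screening": "building block",
    "scheduling": "building block",
    "screening calls": "building block",
    "interview support": "advanced",
    "decision support": "advanced",
}

# Quick wins first, then building blocks, then advanced steps.
BUCKET_ORDER = {"quick win": 0, "building block": 1, "advanced": 2}


@dataclass
class StageEstimate:
    stage: str
    hours_per_week: float  # hypothetical recruiter-hours spent on this stage


def prioritize(estimates):
    """Order stages by bucket, then by time spent (largest first)."""
    return sorted(
        estimates,
        key=lambda e: (BUCKET_ORDER[BUCKETS[e.stage]], -e.hours_per_week),
    )


# Made-up numbers, for illustration only.
estimates = [
    StageEstimate("applicant screening", 12.0),
    StageEstimate("content creation", 4.0),
    StageEstimate("scheduling", 6.0),
    StageEstimate("outreach personalization", 5.0),
]

for item in prioritize(estimates):
    print(f"{BUCKETS[item.stage]:<15} {item.stage:<28} {item.hours_per_week:>5.1f} h/week")
```

Sorting by bucket before hours reflects the advice to build momentum with quick wins first. If freeing up time is the only goal, sort by hours alone.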

There's no universal AI stack for recruiting. What works depends on your market, your hiring volumes, how complex your roles are, and what you're already running. That said, here's how to think through the major categories.

For content and job descriptions, the goal is speed and consistency: tools or infrastructure that let anyone on the team draft a solid, company-specific JD quickly, without starting from scratch every time.

For sourcing, look for platforms that go beyond basic database search: ATS/CRM integrations with genuine sourcing capabilities, or dedicated tools that use AI to proactively surface candidates for outbound pipelines.

For screening, your ATS may already offer more than you're using. Before buying a dedicated tool, audit what your current system can do. The jump from "open applications" to "reviewed shortlist" is where a lot of time disappears.

For scheduling, dedicated tools exist, and many modern ATS platforms include it natively. This is usually a fast win with a relatively low evaluation burden.

For screening calls, take your time. This is a space with real regulatory complexity, and several vendors have run into compliance issues. Don't let a vendor's sales pitch move faster than your legal team's review.

For interview transcription and support, purpose-built tools are worth evaluating alongside whatever your ATS offers. The quality of the insights matters — look for contextual summaries, not just transcripts.

AI in recruiting touches employment law, data privacy, and anti-discrimination regulation, and those rules vary significantly depending on where you're hiring. Europe has stricter frameworks than most US states. Some US cities have their own requirements layered on top of federal rules. "We're not sure what applies to us" is not a safe starting position.

The practical recommendation: before you evaluate any vendor, get clear on the regulatory landscape for your key hiring markets. Then build a working relationship with your legal team (or outside counsel) early. They'll need to be involved anyway, so it's better to bring them in at the start than to have them pump the brakes later.

When evaluating vendors, there are four things that should be non-negotiable:

A human must stay in the loop. This isn't just an ethical preference; it's increasingly a regulatory requirement in multiple jurisdictions. Any tool that makes autonomous decisions about candidates should be a hard no.

Automated decision-making is off the table. Tools that output a "hire/reject" verdict are both legally risky and genuinely hard to defend internally. They also tend to fail compliance reviews.

Transparency matters. You should be able to explain to a hiring manager (or a regulator) exactly how a score or ranking was produced. If a vendor can't give you a clear answer, that's a red flag.

Audit readiness is required. Can the vendor demonstrate compliance with the regulations that apply to your markets? Ask for documentation. If they can't provide it, keep looking.
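
One way to keep those four requirements from getting lost in a long vendor evaluation is to record them as an explicit pass/fail gate. The sketch below is only an illustration: the `VendorAssessment` type and its field names are hypothetical, and the answers still have to come from your own review and your legal team.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class VendorAssessment:
    """Hypothetical record of the four non-negotiables for one vendor."""
    human_in_loop: bool           # a person reviews every candidate outcome
    no_automated_decisions: bool  # the tool never issues a hire/reject verdict
    explainable_scoring: bool     # scores and rankings can be explained
    audit_documentation: bool     # compliance documentation was provided


def failed_requirements(assessment):
    """Return the names of every requirement the vendor does not meet."""
    return [f.name for f in fields(assessment) if not getattr(assessment, f.name)]


# Example: a vendor that cannot explain how its ranking is produced.
vendor = VendorAssessment(
    human_in_loop=True,
    no_automated_decisions=True,
    explainable_scoring=False,
    audit_documentation=True,
)

failures = failed_requirements(vendor)
if failures:
    print("Do not proceed. Failed:", ", ".join(failures))
else:
    print("Passes the non-negotiables. Continue with legal review.")
```

Treating every item as a hard gate, rather than a weighted score, matches the framing above: a vendor that fails any one of them is out.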

Here's something that doesn't get talked about enough: you can build a great AI workflow, select the right tools, and still watch adoption stall because the team isn't bought in.

There's a real psychological tension that shows up in almost every TA team going through this. People can genuinely believe AI creates value for the business while still feeling anxious about what it means for their own job. Both things can be true at the same time, and pretending the anxiety isn't there usually makes it worse.

A few patterns tend to come up repeatedly in teams resisting AI adoption:

"This is going to replace me." The honest response: AI takes over tasks, not jobs. But recruiters who learn to work with AI will have a significant advantage over those who don't. The question isn't whether to engage with AI — it's whether to do it now or later.

"I don't trust the output." This is actually reasonable, and the answer isn't to argue. Trust is built incrementally, through low-stakes experiments where people can see the output, evaluate it, and build their own confidence over time. Start small.

"I don't want another tool to learn." This objection is often really about cognitive overload. The answer is to think in terms of workflow redesign, not tool addition. AI should simplify how work gets done, not add new steps.

There's a five-step approach that works reasonably well for managing this change:

Start with one use case and make it land well. Pick something that will generate visible ROI quickly — ideally within two weeks. Momentum matters.

Find your AI champions early. Every team has a few early adopters who are genuinely excited. Give them visibility and let them pull others in. Adoption that looks peer-driven feels less threatening than adoption that comes from the top.

Make it safe to experiment and fail. If people think a mistake will reflect badly on them, they'll avoid trying. Build psychological safety into how you talk about the transition.

Celebrate the wins publicly. When someone saves 5 hours on screening in a week, say so in your team meeting. Make the value tangible and visible.

Bring skeptics in as testers. Give your most vocal critics a role in evaluating tools rather than leaving them on the sidelines. People who feel heard are less likely to become active blockers.

On the practical side: tool overload and budget constraints are the two blockers teams mention most. If you're locked into long SaaS contracts for non-AI tooling, you may not have the budget to add a full new stack. One approach that works is to start very small, with a limited pilot on a constrained budget, and use the results to build an internal case for broader investment. Vendors are often willing to work with this, especially if they see a path to expansion.

The 90-day frame is useful not because everything has to be done in 90 days, but because it forces prioritization. The goal is to get from "we're thinking about this" to "this is actually running" in a time horizon that's short enough to maintain urgency.

The shape of 90 days tends to look like this: spend the first stretch on diagnosis — needs analysis, stakeholder alignment, compliance groundwork. Use the middle stretch to run your first pilots and work out the kinks in your change management approach. Use the final stretch to consolidate what's working, roll it out more broadly, and establish the measurement framework you'll use going forward.
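
To turn that shape into calendar dates, a small sketch like the following can help. It assumes an even 30/30/30 split and a made-up start date purely for illustration; the phase names paraphrase the three stretches above, and `PHASES` and `plan` are hypothetical names.

```python
from datetime import date, timedelta

# Assumed even split; adjust the day counts to fit your starting point.
PHASES = [
    ("diagnosis", 30),
    ("pilots", 30),
    ("consolidation and rollout", 30),
]


def plan(start):
    """Return (phase name, first day, last day) for each phase, back to back."""
    schedule = []
    cursor = start
    for name, days in PHASES:
        end = cursor + timedelta(days=days - 1)
        schedule.append((name, cursor, end))
        cursor = end + timedelta(days=1)
    return schedule


# Hypothetical kickoff date.
schedule = plan(date(2025, 1, 1))
for name, first, last in schedule:
    print(f"{name:<26} {first.isoformat()} to {last.isoformat()}")
```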

The exact breakdown depends on where you're starting from. But the principle holds: don't try to boil the ocean. Pick the highest-impact use case, make it work, prove the value, then expand.

The teams that successfully become AI-native aren't necessarily the ones with the best tools or the biggest budgets. They're the ones that approach AI as a change management challenge as much as a technology challenge.

That means bringing legal in early, not late. It means building psychological safety before you ask people to change how they work. It means keeping humans in the loop, not as a compliance formality but as a genuine commitment to the kind of recruiting your team stands behind.

The framing that tends to work best: AI isn't replacing what great recruiting looks like. It's giving your team more capacity to actually do it.